Your algorithm has a better configuration. We find it.

HyperOptimizer runs parallel trials for you and shows which configurations perform best (and worst). It works for trading strategies, ML models, simulations, data pipelines, and custom algorithms.

Best score: 0.942

Trials: 128

Guardrail: <200ms

Artifacts: 12

SECURITY

Your code and data stay under your control.

Optimization often involves sensitive models, proprietary algorithms, private datasets, or internal workflows. HyperOptimizer is designed around containerized execution, isolated trials, and clear boundaries between orchestration, metrics, and workload data.

SETUP

Up and running in minutes, not weeks

Bring a containerized workload, define the search space, and run scalable experiments without assembling infrastructure.

Package your workload

Package your workload as a Docker image and run it with your existing CLI entrypoint. No SDK dependency is required.
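As a rough illustration of what a CLI entrypoint inside such an image might look like, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the flag names `--learning-rate` and `--batch-size`, the toy scoring formula, and the idea of reporting metrics as a JSON line on stdout are hypothetical and not a documented HyperOptimizer contract.

```python
import argparse
import json


def parse_args(argv=None):
    """Parse trial parameters passed on the command line (flag names are hypothetical)."""
    parser = argparse.ArgumentParser(description="Example containerized workload")
    parser.add_argument("--learning-rate", type=float, default=0.01)
    parser.add_argument("--batch-size", type=int, default=32)
    # parse_known_args ignores extra arguments instead of exiting with an error.
    args, _unknown = parser.parse_known_args(argv)
    return args


def run_workload(learning_rate, batch_size):
    """Stand-in for real training or backtesting; a toy formula so the script runs."""
    score = 1.0 / (1.0 + abs(learning_rate - 0.01) * 100 + abs(batch_size - 64) / 64)
    latency_ms = 50.0 + batch_size * 0.5
    return {"score": round(score, 4), "latency_ms": latency_ms}


def main(argv=None):
    args = parse_args(argv)
    metrics = run_workload(args.learning_rate, args.batch_size)
    # Assumption: metrics are emitted as one JSON object on stdout.
    print(json.dumps(metrics))
    return metrics


if __name__ == "__main__":
    main()
```

The point of the sketch is that the workload only needs standard command-line parsing and a way to print results, which is consistent with not requiring an SDK.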

Define the search

Define the search inputs and objective. Example: learning_rate, batch_size, and latency constraints.
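To make the example concrete, a search over learning_rate and batch_size with a latency constraint could be written down roughly as follows. This is only a sketch in plain Python: the dictionary layout, the key names, the value ranges, and the helpers `sample_config` and `satisfies_constraints` are all hypothetical and do not represent HyperOptimizer's actual configuration format. The 200 ms limit is an illustrative guardrail value.

```python
import math
import random

# Hypothetical search-space description: one log-scaled float, one categorical.
SEARCH_SPACE = {
    "learning_rate": {"type": "log_uniform", "low": 1e-4, "high": 1e-1},
    "batch_size": {"type": "choice", "values": [16, 32, 64, 128]},
}
# Hypothetical objective and constraint declarations.
OBJECTIVE = {"metric": "score", "direction": "maximize"}
CONSTRAINTS = {"latency_ms": {"below": 200.0}}


def sample_config(space, rng):
    """Draw one random configuration from the search space."""
    config = {}
    for name, spec in space.items():
        if spec["type"] == "log_uniform":
            # Sample uniformly in log space so small values are not under-represented.
            log_low, log_high = math.log(spec["low"]), math.log(spec["high"])
            config[name] = math.exp(rng.uniform(log_low, log_high))
        elif spec["type"] == "choice":
            config[name] = rng.choice(spec["values"])
        else:
            raise ValueError(f"unknown parameter type: {spec['type']}")
    return config


def satisfies_constraints(metrics, constraints):
    """Return True if every constrained metric is strictly below its limit."""
    return all(metrics[name] < limit["below"] for name, limit in constraints.items())


rng = random.Random(0)  # fixed seed so repeated runs draw the same configurations
candidates = [sample_config(SEARCH_SPACE, rng) for _ in range(3)]
```

Random sampling is used here only because it is the simplest strategy to show; it is not a claim about which search algorithm the platform runs.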

Start optimizing

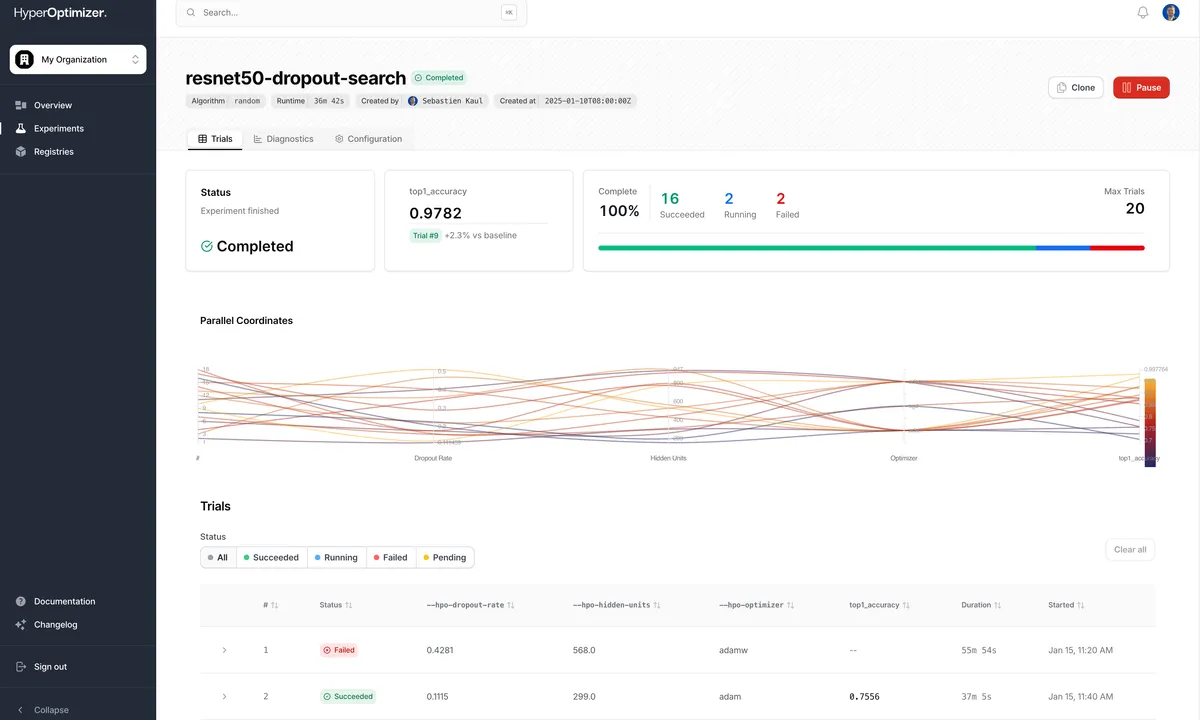

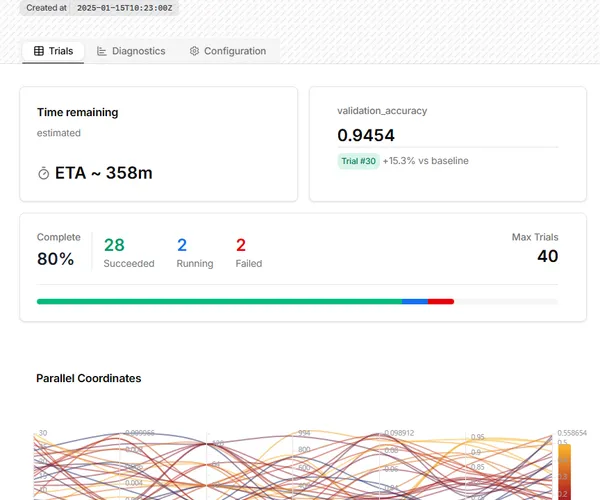

Run parallel trials, monitor progress, and compare outcomes as the best configuration rises to the top.

PRODUCT

From giant search space to best configuration, in one managed run.

Define parameters, run scalable trials, inspect every metric, and keep the configurations that actually perform.

Experiment history

Compare past runs, inspect artifacts, and keep auditable records of which configurations shipped and why.

WHY IT MATTERS

Optimization should not start with infrastructure work.

HyperOptimizer replaces the operational headache around experimentation: orchestration, execution, retries, metrics, logs, and result comparison.

Doing it yourself

Messy, slow, infrastructure-heavy

- Provision compute: Set up worker nodes and capacity.
- Configure orchestration: Manage scheduling, autoscaling, and networking.
- Wire tuning tools: Connect frameworks, storage, and retries.
- Debug failed jobs: Chase retries, runtime errors, and drift.
- Collect scattered metrics: Manually stitch logs and outcomes across runs.

With HyperOptimizer

Managed optimization, from image to result

- Bring your container: Use any Dockerized model, script, strategy, or workload.
- Define your search space: Set parameters, bounds, and constraints.
- Choose objective metrics: Optimize for what matters to your workload.
- Run parallel trials: Launch scalable trials with managed retries.
- Compare ranked results: Review metrics, logs, artifacts, and winners.

USE CASES

Built for any workload that has parameters and metrics.

If it runs in a container and emits results, HyperOptimizer can search for better configurations.

Machine learning

Tune training parameters, preprocessing, model settings, and evaluation metrics.

Quant research

Optimize strategy parameters, risk settings, entry/exit logic, and backtest configurations.

Simulations

Search large configuration spaces for engineering, scientific, Monte Carlo, or agent-based experiments.

Data pipelines

Tune batch sizes, parallelism, memory limits, indexing settings, and processing behavior.

AI agents / LLM workflows

Optimize prompts, retrieval settings, routing logic, thresholds, and tool parameters.

Custom algorithms

Run black-box optimization for any script, service, or algorithm that reports metrics.

WORKFLOW

How HyperOptimizer works

1. Package your workload

Bring a Docker image or containerized job.

2. Define the experiment

Set parameters, ranges, objectives, constraints, and budgets.

3. Run scalable trials

HyperOptimizer schedules, monitors, retries, and captures results.

4. Compare outcomes

Rank configurations by metrics, cost, and custom scores, then inspect the logs and artifacts behind each result.
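The comparison step can be pictured as a filter followed by a sort. The sketch below assumes a hypothetical trial record shape (`id`, `status`, `metrics`) and a hypothetical `rank_trials` helper; it is not the platform's ranking logic, only one simple way to rank by a single metric under a latency guardrail.

```python
def rank_trials(trials, metric="score", maximize=True, latency_limit_ms=200.0):
    """Keep successful trials under the latency guardrail, best metric first."""
    feasible = [
        t for t in trials
        if t["status"] == "succeeded" and t["metrics"]["latency_ms"] < latency_limit_ms
    ]
    return sorted(feasible, key=lambda t: t["metrics"][metric], reverse=maximize)


# Made-up trial records, for illustration only.
trials = [
    {"id": "a", "status": "succeeded", "metrics": {"score": 0.90, "latency_ms": 120.0}},
    {"id": "b", "status": "succeeded", "metrics": {"score": 0.95, "latency_ms": 250.0}},
    {"id": "c", "status": "failed", "metrics": {}},
    {"id": "d", "status": "succeeded", "metrics": {"score": 0.942, "latency_ms": 180.0}},
]

ranked_ids = [t["id"] for t in rank_trials(trials)]
print(ranked_ids)  # ['d', 'a']
```

Trial "b" has the highest raw score but is excluded because it breaks the latency limit, which is why a constraint is treated as a filter rather than as part of the score.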

FAQ

Frequently asked questions

Can't find the answer you're looking for? Reach out to our support team.

Is HyperOptimizer only for trading?

No. Trading strategies are one use case. HyperOptimizer is designed for any containerized workload with parameters, metrics, and an objective.

How is this different from Optuna, Ray Tune, or Katib?

Those are powerful optimization frameworks. HyperOptimizer provides the managed infrastructure, orchestration, trial execution, metric collection, dashboard, and workflow around the optimization process.

What do I need to use it?

Three things: a containerized workload, a search space, and one or more metrics to optimize.

Can I use custom metrics?

Yes. HyperOptimizer is metric-agnostic: optimize for accuracy, return, drawdown, latency, throughput, token cost, runtime, or any custom score you report.

Does my workload need to be machine learning?

No. Workloads can be ML models, trading strategies, simulations, data pipelines, LLM workflows, or any custom algorithm that can run in a container and emit metrics.

Can I run private workloads?

HyperOptimizer is designed for controlled environments with containerized, isolated trial execution. It supports private images and keeps workload execution separated from orchestration and experiment metadata.

Do you support private Docker images?

Yes. You can run private container images as part of optimization workflows, with access and execution controlled by your environment configuration.

Can I control compute limits and budgets?

Yes. Experiments can be configured with resource limits, trial budgets, and runtime constraints so optimization stays within operational boundaries.

Do you support parallel optimization?

Yes. Multiple trials run in parallel, and scheduling adapts as results come in so experiments converge faster.

What happens if a trial fails or times out?

Each trial runs independently. Failed trials are surfaced in the dashboard with logs and status, and remaining trials continue based on your configured budget.
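The idea that one failed trial does not stop the others can be sketched locally with Python's standard `concurrent.futures` module. In this sketch, threads stand in for isolated containers, and `run_trials`, the result record shape, and the example `trial` function are hypothetical; timeouts and retries are deliberately left out, and none of this describes HyperOptimizer's actual scheduler.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_trials(configs, trial_fn, max_workers=4):
    """Run each config independently; record failures instead of aborting the batch."""
    results = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(trial_fn, cfg): cfg for cfg in configs}
        for future in as_completed(futures):
            cfg = futures[future]
            try:
                metrics = future.result()
                results.append({"config": cfg, "status": "succeeded", "metrics": metrics})
            except Exception as exc:
                # The failure is captured with its error; other trials keep running.
                results.append({"config": cfg, "status": "failed", "error": repr(exc)})
    return results


def trial(cfg):
    """Toy trial that fails on an invalid batch size."""
    if cfg["batch_size"] <= 0:
        raise ValueError("batch_size must be positive")
    return {"score": cfg["batch_size"] / 128}


results = run_trials([{"batch_size": 32}, {"batch_size": 0}, {"batch_size": 64}], trial)
```

Results arrive in completion order, so a caller that needs a stable ordering would sort them afterwards.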

Stop guessing configurations. Start running better experiments.

Join the waitlist for managed optimization infrastructure built for models, strategies, simulations, pipelines, and custom algorithms. Try the platform, share your feedback, and help shape the future of optimization workflows. Want us to support your framework or stack? Let us know.